HERMES++: Toward a Unified Driving World Model for 3D Scene Understanding and Generation

Generated by JarvisForResearchers Bot on 2026-05-01

TL;DR

HERMES++ establishes a unified driving world model by integrating 3D scene understanding and future geometry prediction within a single framework. This is achieved by leveraging a Bird’s-Eye View (BEV) representation and employing LLM-enhanced world queries to condition geometric evolution on semantic context, all while maintaining structural integrity via a Joint Geometric Optimization strategy.

The Problem

Existing driving world models exhibit a functional dichotomy. On one hand, many focus heavily on future scene generation, often neglecting a comprehensive, explicit understanding of the current 3D scene state. On the other hand, while Large Language Models (LLMs) excel at semantic interpretation, they inherently lack the capacity to predict the precise future geometric evolution of a physical scene. This creates a fundamental disconnect: we have sophisticated semantic interpreters that cannot predict physical outcomes, and geometric predictors that lack deep semantic grounding. Furthermore, supervision derived solely from future observations often imposes only explicit constraints, which is insufficient to guarantee the structural consistency of the predicted scene evolution.

Key Contributions

We contribute three primary advancements: 1. Proposing a unified framework that effectively integrates 3D scene understanding and future geometry prediction using a unified representation. 2. Devising a Joint Geometric Optimization strategy to enforce structural integrity by combining explicit geometric constraints with implicit geometric regularization on the latent manifold. 3. Introducing LLM-enhanced world queries and Textual Injection to facilitate knowledge transfer, allowing semantic reasoning to directly guide future scene evolution.

How It Works

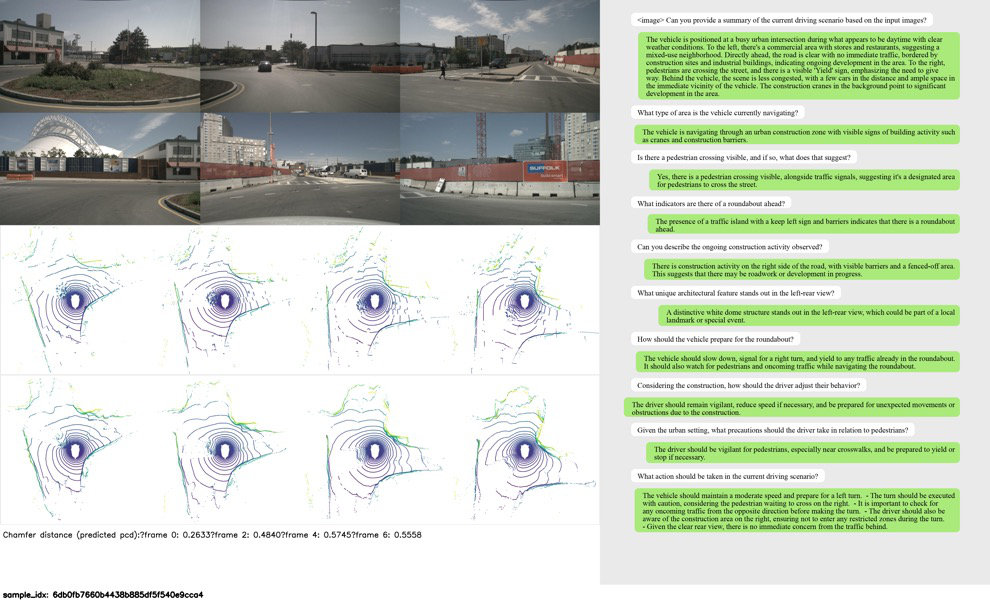

Fig. 1: (a) Previous driving world models focus on generative scene evolution prediction. (b) Large language models for driving

are limited to scene understanding. (c) The proposed framework unifies 3D scene understanding and scene evolution generation

with the BEV representation. (d) Quantitative c

Fig. 1: (a) Previous driving world models focus on generative scene evolution prediction. (b) Large language models for driving

are limited to scene understanding. (c) The proposed framework unifies 3D scene understanding and scene evolution generation

with the BEV representation. (d) Quantitative c

Fig. 1: (a) Previous driving world models focus on generative scene evolution prediction. (b) Large language models for driving

are limited to scene understanding. (c) The proposed framework unifies 3D scene understanding and scene evolution generation

with the BEV representation. (d) Quantitative c

Fig. 1: (a) Previous driving world models focus on generative scene evolution prediction. (b) Large language models for driving

are limited to scene understanding. (c) The proposed framework unifies 3D scene understanding and scene evolution generation

with the BEV representation. (d) Quantitative c

Fig. 1: (a) Previous driving world models focus on generative scene evolution prediction. (b) Large language models for driving

are limited to scene understanding. (c) The proposed framework unifies 3D scene understanding and scene evolution generation

with the BEV representation. (d) Quantitative c

Fig. 1: (a) Previous driving world models focus on generative scene evolution prediction. (b) Large language models for driving

are limited to scene understanding. (c) The proposed framework unifies 3D scene understanding and scene evolution generation

with the BEV representation. (d) Quantitative c

HERMES++ operates by first consolidating multi-view spatial information into a Bird’s-Eye View (BEV) representation, which serves as a structure amenable to interaction with LLMs. Semantic context is then injected into the temporal prediction pipeline via LLM-enhanced world queries. These queries aggregate rich world knowledge from text tokens and interact with BEV features through the Current-to-Future Link. This link is crucial as it conditions the geometric evolution on both semantic context (via Textual Injection) and ego-motion. Finally, the predicted latent representations are decoded into geometry using a differentiable renderer, with the entire process constrained by a Joint Geometric Optimization strategy that ensures geometric fidelity.

BEV representation

The BEV representation serves as the canonical, spatially coherent substrate for the entire model. It consolidates disparate multi-view spatial information into a unified grid structure. This transformation is executed by a Visual Tokenizer, which employs cross-attention mechanisms to map high-dimensional image inputs into the BEV space, thereby compressing the input while preserving necessary spatial relationships for downstream processing.

Visual Tokenizer

The Visual Tokenizer is responsible for the initial spatial encoding. It takes multi-view images and transforms them into the BEV space. This transformation is achieved via cross-attention, which allows the model to fuse information across different camera perspectives into a single, unified spatial representation, effectively compressing the input while maintaining geometric fidelity.

LLM-enhanced world queries

These queries are the mechanism for injecting high-level semantic knowledge into the geometric prediction pipeline. They leverage causal attention to aggregate rich world knowledge derived from input text tokens. By processing these tokens, the queries generate context-aware embeddings that represent the current semantic understanding of the scene, which is then utilized to guide the temporal evolution.

Current-to-Future Link

This component bridges the temporal gap between the current state and future timesteps. It propagates the encoded BEV features forward in time. Crucially, this propagation is conditioned not only on ego-motion but also on the enriched queries and text embeddings. The Textual Injection mechanism is embedded within this link, allowing the semantic context derived from the LLM to directly modulate how the geometry is predicted to change over time.

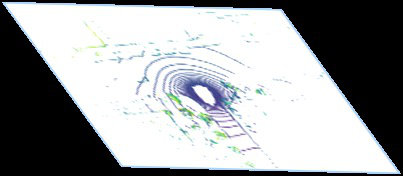

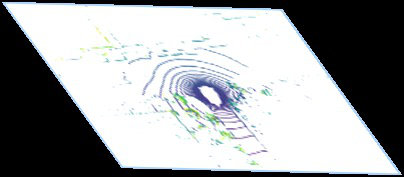

Joint Geometric Optimization strategy

To prevent the predicted latent manifold from drifting into physically implausible configurations, this strategy enforces structural integrity. It operates by simultaneously minimizing two objectives: first, explicit geometric constraints applied directly to the reconstructed point clouds, and second, an implicit geometric regularization applied to the latent manifold itself. This dual constraint ensures that the predicted geometry adheres both to observable physical laws and to learned geometric priors.

differentiable Render

The differentiable Render module serves as the geometric decoder. It takes the feature representations predicted by the temporal network and maps them back into a tangible geometric output, specifically point clouds. The differentiability of this module is essential, as it allows gradients from the geometric loss functions (like those in the Joint Geometric Optimization strategy) to flow back through the rendering process into the latent space, enabling end-to-end geometric training.

Results

| Metric | Value | Baseline | Source |

|---|---|---|---|

| 3s point cloud generation error | 8.2% reduction | DriveX [18] | Abstract |

| CIDEr metric (understanding task) | 9.2% outperformance | Omni-Q [15] | Abstract |

| generation error | 13.7% reduction | conference version | Section 3 |

Why This Matters for Robotics

The integration demonstrated by HERMES++ addresses a critical bottleneck in deploying perception-to-action pipelines in autonomous systems. By unifying semantic understanding (the "what" and "why") with predictive geometry (the "where" and "how"), the model moves beyond mere scene reconstruction toward genuine world modeling. This capability is vital for robust planning, as a system can now reason about why a scene will evolve a certain way based on semantic cues (e.g., "the pedestrian will cross the street") and then predict the precise geometric trajectory required for safe navigation.

Limitations & Open Questions

The current formulation is constrained by its input modality, relying exclusively on multi-view images for scene input and producing point clouds as output. Furthermore, the paper does not explicitly detail limitations beyond the inherent challenges addressed by the proposed components, leaving open questions regarding the scalability of the LLM integration to extremely long-horizon predictions or the robustness of the Textual Injection mechanism under highly ambiguous semantic inputs.

Citation

Paper: 2604.28196

@article{260428196,

title = {HERMES++: Toward a Unified Driving World Model for 3D Scene Understanding and Generation},

author = {Xin Zhou and Dingkang Liang and Xiwu Chen and Feiyang Tan and Dingyuan Zhang and Hengshuang Zhao et al.},

journal = {arXiv preprint arXiv:2604.28196},

year = {2026},

url = {https://arxiv.org/abs/2604.28196}

}